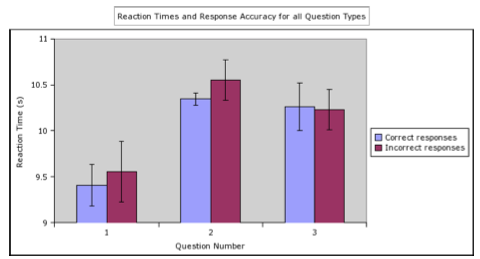

From Discussions VOL. 2 NO. 1

Individualized Behavioral and Image Analysis of Response Time, Accuracy, and Social Cognitive Load During Social Judgments in Adolescents

Data and Image Acquisition

Data for this study were acquired using a 3T Siemens Allegra MRI scanner located at the Cleveland Clinic Foundation. The video paradigm was presented via back-projection, and a mirror fixed to the head coil in the scanner was in line with the participants' field of vision. The trigger for the video paradigm was coordinated with data acquisition from the fMRI scanner through MATLAB® software. The behavioral responses captured using the 5DT virtual reality gloves were coded and reviewed by an independent member of the study at a later date.

The imaging protocol consisted of the following. Anatomic scans: 3D whole-brain T1: T1-weighted inversion recovery turboflash (MPRAGE), 120 axial slices, thickness 1.2 mm, field of view (FOV) 256 mm x 256 mm, TI/TE/TR = 1900/1.71/900 ms, flip angle (FA) 80°, matrix 256 x 128, receiver bandwidth (BW) = 32 kHz. Imaging for the fMRI activation study: 101 EPI volumes of 32 interleaved axial slices were acquired using a prospective motion-controlled, gradient recalled echo, echoplanar acquisition (Thesen et al., 2000) with TE/TR/flip = 39 ms/2 s/90°, matrix = 64 x 64, 256 mm x 256 mm FOV, BW = 125 kHz.

Imaging Data Analysis

Imaging data were processed and analyzed using Statistical Parametric Mapping (SPM5) software developed by the Wellcome Department of Imaging Neuroscience at University College London (http://www.fil.ion.ucl.ac.uk/spm/software/spm5). Preprocessing of the data included slice timing correction, realignment and unwarping, co-registration, normalization to the MNI T1 template (Montreal Neurological Institute), and smoothing with a 6 mm isotropic Gaussian kernel. These preprocessing steps allow SPM to combine the functional and structural imaging data into a single image for analysis.
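As a rough illustration of what the acquisition and smoothing parameters above imply, the following sketch derives three quantities from the reported values: the in-plane EPI voxel size, the duration of one functional run, and the standard deviation of the 6 mm smoothing kernel. It assumes the 6 mm figure is the kernel's full width at half maximum (FWHM), which is how SPM specifies smoothing kernels; the function names are illustrative and not part of the study's pipeline.

```python
import math

# Values reported in the acquisition and preprocessing description.
FOV_MM = 256.0      # EPI field of view along one in-plane axis
MATRIX = 64         # EPI matrix size along the same axis
TR_S = 2.0          # repetition time: one EPI volume every 2 s
N_VOLUMES = 101     # EPI volumes per functional run
FWHM_MM = 6.0       # smoothing kernel width (assumed to be FWHM)


def in_plane_voxel_mm(fov_mm: float, matrix: int) -> float:
    """In-plane voxel edge length: field of view divided by matrix size."""
    return fov_mm / matrix


def run_duration_s(n_volumes: int, tr_s: float) -> float:
    """Total acquisition time of one run: number of volumes times TR."""
    return n_volumes * tr_s


def fwhm_to_sigma(fwhm: float) -> float:
    """Convert a Gaussian FWHM to its standard deviation."""
    return fwhm / (2.0 * math.sqrt(2.0 * math.log(2.0)))


if __name__ == "__main__":
    print("in-plane voxel (mm):", in_plane_voxel_mm(FOV_MM, MATRIX))
    print("run duration (s):", run_duration_s(N_VOLUMES, TR_S))
    print("kernel sigma (mm):", round(fwhm_to_sigma(FWHM_MM), 3))
```

Under these assumptions the acquired in-plane voxels are 4 mm wide, so a 6 mm kernel spans about one and a half acquired voxels in-plane.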
Realigning takes all of the separate imaging files and puts them into the software program in the order in which they were taken. This process was completed for the structural imaging scans and the functional imaging scans separately. Another preprocessing step, spatial normalization, takes into account the normal variation in individuals' head size. The images were then smoothed to accommodate differences in individual brain structures, and prospective motion correction was used as the primary method to deal with participant movement within the scanner. Following this, grey-matter segmented and smoothed MPRAGE images were combined with mean EPI images averaged across subjects to create a single union image.

Individual subject data were analyzed in a fixed effects model with the canonical HRF as basis function. The six realignment parameters (3 rotations and 3 translations) were included as regressors. SPM identifies functionally activated coordinates and indicates cluster size. Significant cluster size was defined as 54 voxels or greater. The completion of these tasks allowed for a clear overlay of the functional imaging data over the structural data and the identification of activation in pre-specified regions of interest (Frackowiak, Friston, Frith, Dolan, Price, Zeki, Ashburner, & Penny, 2004).

Standardized Group Imaging Analysis

Ciccia and colleagues' (2006) previous analysis of the group data looked at the brain activation patterns that persisted across all study participants. To analyze the group data, a single reaction time decision point was identified for each social judgment. The ten-second time point was selected because a preliminary inspection of the behavioral data indicated that many participants registered their decisions at the ten-second mark.
These data were then examined in two ways: as a group image (where the 10-second decision points for all of the participants were averaged together), or as individuals (each individual participant's activation using the same ten-second marker as the decision point). Activation patterns were then compared according to question difficulty to discover which brain areas are activated by different levels of social judgments (Ciccia, 2006).

Individual Imaging Analysis

The image analysis for the present study considered each participant's brain activation patterns rather than grouped results as discussed by Ciccia and colleagues (2006). Although many of the decision points fell on the ten-second mark, the actual reaction times ranged from 7.85 seconds to 12.05 seconds. The exact second of response was pinpointed from the behavioral glove response data. This temporal information was used to individualize the functional imaging analysis in SPM.

These scans were then divided into groups that corresponded to question difficulty (i.e., all of the response times for the first question, 'Is X interested?', were put into one group, etc.), and these groups were compared against each other to find activation effects that were specific to each question set. In other words, by subtracting the activations caused by the simplest question (Is X interested?) from the activations caused by the most difficult question (Does Y think that X gets the meaning?), the areas of the brain used solely for the most difficult question become highlighted. The subtraction method allows researchers to see which brain areas are activated as adolescents are faced with more challenging social judgments. The results from the customized individual analysis completed for this study were then compared to the individual analysis previously conducted by Ciccia and colleagues (2006), which used a standardized 10-second decision time.
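To make the difference between the standardized and the individualized decision point concrete, the sketch below maps a response time onto the EPI volume being acquired at that moment, using the reported TR of 2 s. It is a minimal illustration and not the SPM procedure used in the study: it assumes response times are measured from the start of the acquisition window in question and that volumes are indexed from zero, and the function name `volume_index` is hypothetical.

```python
TR_S = 2.0  # repetition time reported for the EPI acquisition


def volume_index(response_time_s: float, tr_s: float = TR_S) -> int:
    """Return the 0-based index of the volume acquired at response_time_s.

    Assumes volume 0 starts at t = 0 and each volume lasts tr_s seconds.
    """
    if response_time_s < 0:
        raise ValueError("response time must be non-negative")
    return int(response_time_s // tr_s)


if __name__ == "__main__":
    # Standardized decision point versus the observed extremes.
    for label, t in [("standard", 10.0), ("fastest", 7.85), ("slowest", 12.05)]:
        print(f"{label}: {t} s falls in volume {volume_index(t)}")
```

Under these assumptions a fixed 10-second marker and the fastest and slowest observed responses fall in three different volumes, which is the motivation for individualizing the decision point.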
Behavioral Data Analysis

The behavioral data analysis focused on the responses that the participants made while answering the social questions during the functional imaging protocol. The laser gloves allowed the computer software (MATLAB) to register each response as yes or no and to register the reaction time for each decision. Data for reaction time and yes/no responses were organized by subject, question type, block order, and accuracy in a way that was appropriate for statistical analysis. Researchers in the lab identified correct and incorrect answers based on agreed-upon criteria. Each participant's responses were then coded as correct or incorrect, and the data were entered into SPSS® statistical software (http://www.spss.com) to address the specific aims of this study, which included: 1) the relationship of reaction time to response accuracy (correct and incorrect answers); 2) the relationship between reaction time and question type; 3) the relationship between accuracy and question type; and 4) the interactions between accuracy, question type, and reaction time.

RESULTS

Behavioral Data

It was first determined that all participants completed all three blocks. Only one participant failed to make social judgments (i.e., did not give a response when prompted with the questions) during the paradigm. This same participant also remained undecided on four responses (by moving both hands in response to a question, thus answering both yes and no). Since these six responses all occurred for the same participant and during the same block, results from that block were discarded. It was also noted that the last participant response in each of the blocks was not collected by MATLAB. This left only fourteen question responses per block for analysis, resulting in a loss of twenty-four responses to questions of type three.
The information gleaned from the behavioral response data (reaction time, accuracy, and question type) was run through SPSS to determine the main effects of, and the interaction effects among, these three variables.

Accuracy vs. Reaction Time vs. Question Type

The relationships between these three variables are very complex. These relationships are illustrated in Figure 3. The first thing to note is that all question one responses (both correct and incorrect) have a faster reaction time than questions of type two and type three. The response times of questions of type three appear slightly faster than those of type two. The second thing to note is that the reaction times of the correct responses are faster than the reaction times for incorrect responses. It is also of interest that there seems to be more variation in reaction times for incorrect answers than for correct answers.

A relationship that is not represented in this figure but that was clear in the data is that there were more correct responses than incorrect responses. Overall, there were 243 correct answers, 87 incorrect answers, and six undecided or null responses. Of these, questions of type one had the most correct responses, followed by questions of type two, followed by questions of type three. Questions of type two had the most incorrect responses, with the number of incorrect responses being roughly the same for questions of type one and questions of type three.

A MANOVA was run with all of the variables to determine the relationships between reaction time, accuracy, and question type. This test determined that there was a significant main effect for the relationship between these three variables (p < .001 for all variables). Additional post-hoc testing and testing of between-subject effects revealed additional information about the nature of these relationships, which is discussed further in this section.
Figure 3: This figure shows the interaction between response time and accuracy across all question blocks. These data demonstrate that questions of type one had a much faster reaction time than questions of type two and type three. Questions of type two had the slowest response, followed closely by questions of type three. These data also show that correct answers have a faster reaction time than incorrect answers.

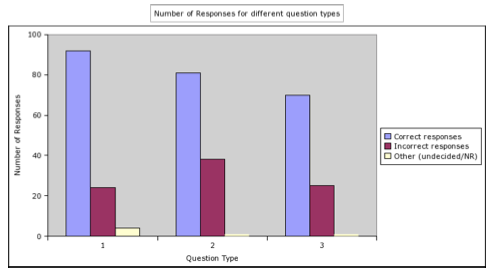

Accuracy vs. Question Type

Overall, there were 92 correct responses, 24 incorrect responses, and four null or undecided responses for questions of type one. There were 81 correct, 38 incorrect, and one null or undecided response for questions of type two, and there were 70 correct, 25 incorrect, and one null or undecided response for questions of type three (not including the 24 missing question three responses). These data can be seen in Figure 4. Overall, it does not appear that question type has any real effect on accuracy. Questions of type one had the most correct responses, followed by questions of type two and then type three. Using SPSS, it was determined that the relationship between accuracy and question type is not statistically significant. This was true across all question types (Tukey HSD p = .897, .897, and 1.000; LSD p = .661, .661, and 1.000 for Q1, Q2, Q3 and Q1, Q2, Q3 respectively).

Figure 4: This figure shows the number of correct and incorrect responses per question type. These results demonstrate that correct responses were much more prevalent than incorrect responses. "Other" responses include answers where the participants either did not respond or replied with both 'yes' and 'no' responses. The data indicated that questions that required a low social cognitive load had more correct answers than questions that required a higher social cognitive load. These data are skewed by the missing data in two of the question sets.
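The per-question counts above can be checked against the overall totals reported earlier (243 correct, 87 incorrect, six null or undecided) with a short tally. The sketch below also computes the proportion of correct answers per question type, which is one way to compare types that have different numbers of recorded responses. Excluding the "other" responses from the denominator is an assumption made here for illustration; it is not a calculation reported in the study, and the names used are hypothetical.

```python
# Response counts per question type, as reported in the text.
COUNTS = {
    "type 1": {"correct": 92, "incorrect": 24, "other": 4},
    "type 2": {"correct": 81, "incorrect": 38, "other": 1},
    "type 3": {"correct": 70, "incorrect": 25, "other": 1},
}


def totals(counts: dict) -> dict:
    """Sum each response category across all question types."""
    summed = {"correct": 0, "incorrect": 0, "other": 0}
    for row in counts.values():
        for category, n in row.items():
            summed[category] += n
    return summed


def proportion_correct(row: dict) -> float:
    """Correct answers divided by decided answers (assumption: 'other' excluded)."""
    return row["correct"] / (row["correct"] + row["incorrect"])


if __name__ == "__main__":
    print("totals:", totals(COUNTS))
    for name, row in COUNTS.items():
        print(name, "proportion correct:", round(proportion_correct(row), 3))
```

Because type three has 24 fewer recorded responses, comparing proportions rather than raw counts avoids penalizing it for the missing data.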

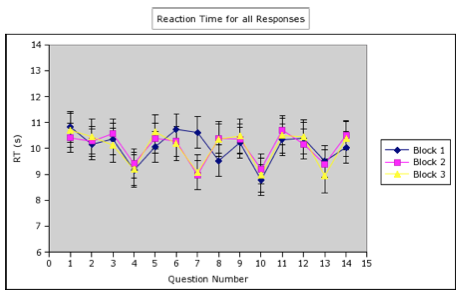

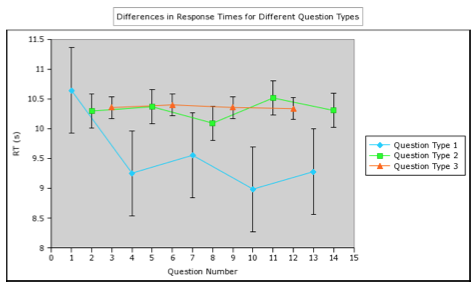

Question Type vs. Response Time

The mean reaction time for each block was approximately 10 seconds (10.05s ± 0.6s, 10.07s ± 0.57s, and 10.03s ± 0.67s for blocks 1, 2, and 3 respectively), and the overall response time was 10.05s ± 0.60s. This gives credence to the decision to use 10 seconds as an average response time for the analysis previously conducted by Ciccia and colleagues (2006). It is also important to note that the response times did not change over the course of the study. Having the same response time over all three blocks indicates that the participants were not getting faster or slower as the study progressed.

Separating the responses by question type, it becomes clear that reaction time varies with changes in social cognitive load. Specifically, the data indicate that questions of type one had a faster reaction time than questions of either type two or type three. As Figure 5 indicates, this difference in reaction time seems to be restricted to question type one versus question types two and three, and there does not appear to be any difference in reaction time between questions of type two and type three. The mean reaction time for questions of type one over all blocks is 9.54s ± 0.71s. This is appreciably lower than the mean reaction times of questions of type two and three (10.32s ± 0.29s and 10.36s ± 0.18s respectively) and could mean that the actual time of response for questions of type one is located in the scan before the one that was examined in the group data.

The differences in reaction times can be clearly seen in Figure 6. It is also of note in this figure that the first question asked in each block has a slower reaction time than any other question of type one in the study. In fact, the reaction time of this first question is often higher than those of the other question types. The relationship between question type and response time was determined in post-hoc testing.
It was determined that there was a significant difference (p < .001) for questions of type one as compared to questions of type two and type three. No significant difference was found between response times for questions of type two and type three (Tukey HSD p = .951, LSD p = .766).

Figure 5: This graph shows the average reaction times for questions during the three blocks. These data indicate that, on average, questions of type one had a faster reaction time. This was true across all blocks. These data also indicate that questions of type two and type three had similar reaction times.

Figure 6: This figure shows the response times for different question types. Questions of type one (representing questions requiring the lowest social cognitive load) had the fastest response time of any of the questions. Questions of type two and of type three (representing moderate and high social cognitive load) have about the same reaction times.